Course content

- Practical Reinforcement Learning – Agents and Environments

- Advanced Practical Reinforcement Learning

- Hands-On Deep Q-Learning

- Reinforcement Learning with TensorFlow & TRFL

21 hours (usually 3 days including breaks)

Audience

Reinforcement Learning (RL) is a machine learning technique in which a computer program (agent) learns to behave in an environment by performing actions and receiving feedback on their results. For each good action, the agent receives positive feedback (a reward), and for each bad action, it receives negative feedback (a penalty).
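As a rough illustration of this feedback loop, the sketch below lets an agent choose between two actions and keep a running estimate of the feedback each one earns. The environment (action 1 always rewarded, action 0 always penalized), the function name `run_agent`, and the exploration rate are assumptions made for this example, not part of the course material.

```python
import random

def run_agent(steps=500, epsilon=0.1, seed=0):
    """Learn by trial and error which of two actions earns positive feedback."""
    rng = random.Random(seed)
    # Hypothetical environment: action 1 is "good" (+1), action 0 is "bad" (-1).
    feedback = {0: -1.0, 1: 1.0}
    value = [0.0, 0.0]  # agent's running estimate of each action's feedback
    count = [0, 0]      # how often each action has been tried
    for _ in range(steps):
        # Explore occasionally; otherwise pick the action that looks best so far.
        if rng.random() < epsilon:
            action = rng.randrange(2)
        else:
            action = max(range(2), key=lambda a: value[a])
        reward = feedback[action]
        count[action] += 1
        # Incremental average of the feedback received for this action.
        value[action] += (reward - value[action]) / count[action]
    return value

values = run_agent()
print(values)
```

After a few steps the agent's estimate for the rewarded action is higher than for the penalized one, so it chooses the rewarded action most of the time.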

This instructor-led, live training (online or onsite) is aimed at data scientists who wish to go beyond traditional machine learning approaches to teach a computer program to figure out things (solve problems) without the use of labeled data and big data sets.

By the end of this training, participants will be able to:

Format of the Course

Course Customization Options

Introduction

Elements of Reinforcement Learning

Important Terms (Actions, States, Rewards, Policy, Value, Q-Value, etc.)

Overview of Tabular Solutions Methods

Creating a Software Agent

Understanding Value-based, Policy-based, and Model-based Approaches

Working with the Markov Decision Process (MDP)

How Policies Define an Agent’s Way of Behaving

Using Monte Carlo Methods

Temporal-Difference Learning

n-step Bootstrapping

Approximate Solution Methods

On-policy Prediction with Approximation

On-policy Control with Approximation

Off-policy Methods with Approximation

Understanding Eligibility Traces

Using Policy Gradient Methods

Summary and Conclusion

21 hours (usually 3 days including breaks)

Audience

Reinforcement Learning (RL) is an area of AI (Artificial Intelligence) used to build autonomous systems (i.e., an “agent”) that learn by interacting with their environment in order to solve a problem. RL has applications in areas such as robotics, gaming, consumer modeling, healthcare, supply chain management, and more.

This instructor-led, live training (online or onsite) is aimed at data scientists who wish to create and deploy a Reinforcement Learning system, capable of making decisions and solving real-world problems within an organization.

By the end of this training, participants will be able to:

Format of the Course

Course Customization Options

Introduction

Understanding Adaptive Learning Systems and Artificial Intelligence (AI)

How Agents Perceive State

How to Reward an Agent

Case Study: Interacting with Website Visitors

Preparing the Environment for the Agent

Deep Dive into Reinforcement Learning Algorithms

Value-Based Methods vs Policy-Based Methods

Choosing a Reinforcement Learning Model

Using the Q-Learning Model-Free Reinforcement Learning Algorithm

Designing the Agent

Case Study: Smart Assistants

Interfacing the Agent to a Production Environment

Measuring the Results of Agent Actions

Troubleshooting

Summary and Conclusion

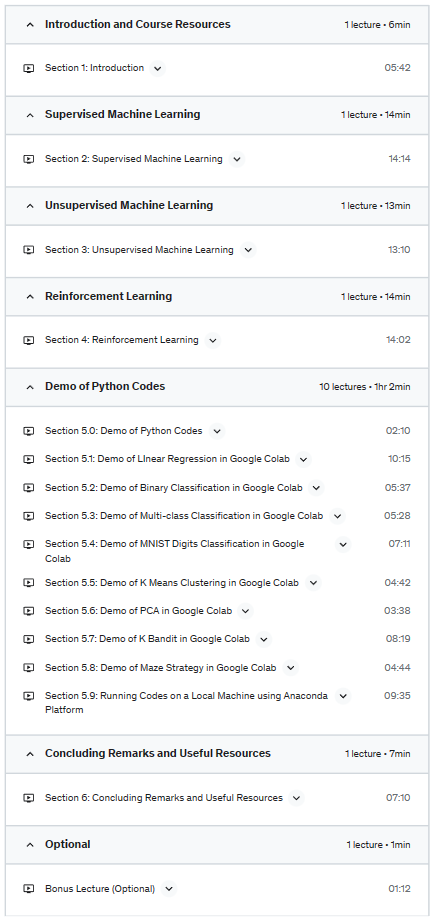

Overview of Supervised, Unsupervised, and Reinforcement Learning

Course Outcome:

Learners completing this course will be able to give definitions and explain the types of problems that can be solved by the 3 broad areas of machine learning: Supervised, Unsupervised, and Reinforcement Learning.

Course Topics and Approach:

This course gives a gentle introduction to the 3 broad areas of machine learning: Supervised, Unsupervised, and Reinforcement Learning. The goal is to explain the key ideas using examples with many plots and animations and little math, so that the material is accessible to a wide range of learners. The lectures are supplemented by Python demos, which show machine learning in action. Learners are encouraged to experiment with the course demo code. Additionally, information about machine learning resources is provided, including sources of data and publicly available software packages.

Course Audience:

This course has been designed for ALL LEARNERS!!!

Teaching Style and Resources:

Python Demos:

There are several options for running the Python demos:

2021.09.28 Update

This course provides an introduction to basic computational methods for understanding what nervous systems do and for determining how they function. We will explore the computational principles governing various aspects of vision, sensory-motor control, learning, and memory. Specific topics that will be covered include representation of information by spiking neurons, processing of information in neural networks, and algorithms for adaptation and learning. We will make use of Matlab/Octave/Python demonstrations and exercises to gain a deeper understanding of concepts and methods introduced in the course. The course is primarily aimed at third- or fourth-year undergraduates and beginning graduate students, as well as professionals and distance learners interested in learning how the brain processes information.

Content Rating: 95% (8,784 ratings)

WEEK1

4 hours to complete

This module includes an Introduction to Computational Neuroscience, along with a primer on Basic Neurobiology.

6 videos (Total 89 min), 6 readings, 2 quizzes

WEEK2

4 hours to complete

This module introduces you to the captivating world of neural information coding. You will learn about the technologies that are used to record brain activity. We will then develop some mathematical formulations that allow us to characterize spikes from neurons as a code, at increasing levels of detail. Finally we investigate variability and noise in the brain, and how our models can accommodate them.

8 videos (Total 167 min), 3 readings, 1 quiz

WEEK3

3 hours to complete

In this module, we turn the question of neural encoding around and ask: can we estimate what the brain is seeing, intending, or experiencing just from its neural activity? This is the problem of neural decoding and it is playing an increasingly important role in applications such as neuroprosthetics and brain-computer interfaces, where the interface must decode a person’s movement intentions from neural activity. As a bonus for this module, you get to enjoy a guest lecture by well-known computational neuroscientist Fred Rieke.

6 videos (Total 114 min), 2 readings, 1 quiz

WEEK4

3 hours to complete

This module will unravel the intimate connections between the venerable field of information theory and that equally venerable object called our brain.

5 videos (Total 98 min), 2 readings, 1 quiz

WEEK5

4 hours to complete

Computing in Carbon (Adrienne Fairhall)

This module takes you into the world of biophysics of neurons, where you will meet one of the most famous mathematical models in neuroscience, the Hodgkin-Huxley model of action potential (spike) generation. We will also delve into other models of neurons and learn how to model a neuron’s structure, including those intricate branches called dendrites.

7 videos (Total 114 min), 2 readings, 1 quiz

WEEK6

3 hours to complete

Computing with Networks (Rajesh Rao)

This module explores how models of neurons can be connected to create network models. The first lecture shows you how to model those remarkable connections between neurons called synapses. This lecture will leave you in the company of a simple network of integrate-and-fire neurons which follow each other or dance in synchrony. In the second lecture, you will learn about firing rate models and feedforward networks, which transform their inputs to outputs in a single “feedforward” pass. The last lecture takes you to the dynamic world of recurrent networks, which use feedback between neurons for amplification, memory, attention, oscillations, and more!

3 videos (Total 72 min), 2 readings, 1 quiz
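To give a flavor of the integrate-and-fire idea mentioned in this module, here is a minimal sketch of a single leaky integrate-and-fire neuron, not the course's own demo and not a network. The function name `simulate_lif` and all parameter values (time constant, threshold, resistance, input current) are illustrative assumptions.

```python
def simulate_lif(input_current, dt=0.1, tau=10.0, v_rest=-65.0,
                 v_thresh=-50.0, v_reset=-65.0, resistance=10.0):
    """Return spike times (ms) of a leaky integrate-and-fire neuron."""
    v = v_rest
    spikes = []
    for step, current in enumerate(input_current):
        # Euler step of: tau * dV/dt = -(V - V_rest) + R * I
        v += dt * (-(v - v_rest) + resistance * current) / tau
        if v >= v_thresh:
            spikes.append(step * dt)  # record the spike time
            v = v_reset               # reset the membrane potential
    return spikes

steps = 2000                              # 200 ms at dt = 0.1 ms
no_input = simulate_lif([0.0] * steps)    # no drive: the neuron stays at rest
driven = simulate_lif([2.0] * steps)      # constant drive: regular spiking
print(len(no_input), len(driven))
```

With no input the membrane potential never leaves its resting value, while a constant input that pushes the steady-state potential above threshold produces a regular spike train.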

WEEK7

3 hours to complete

Networks that Learn: Plasticity in the Brain & Learning (Rajesh Rao)

This module investigates models of synaptic plasticity and learning in the brain, including a Canadian psychologist’s prescient prescription for how neurons ought to learn (Hebbian learning) and the revelation that brains can do statistics (even if we ourselves sometimes cannot)! The next two lectures explore unsupervised learning and theories of brain function based on sparse coding and predictive coding.

4 videos (Total 86 min), 2 readings, 1 quiz

WEEK8

3 hours to complete

Learning from Supervision and Rewards (Rajesh Rao)

In this last module, we explore supervised learning and reinforcement learning. The first lecture introduces you to supervised learning with the help of famous faces from politics and Bollywood, casts neurons as classifiers, and gives you a taste of that bedrock of supervised learning, backpropagation, with whose help you will learn to back a truck into a loading dock. The second and third lectures focus on reinforcement learning. The second lecture will teach you how to predict rewards à la Pavlov’s dog and will explore the connection to that important reward-related chemical in our brains: dopamine. In the third lecture, we will learn how to select the best actions for maximizing rewards, and examine a possible neural implementation of our computational model in the brain region known as the basal ganglia. The grand finale: flying a helicopter using reinforcement learning!
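Reward prediction of the Pavlovian kind can be sketched with a simple error-driven update in the style of the Rescorla-Wagner rule. This is an illustrative assumption about what such a model can look like, not the lecture's own code; the function name `learn_reward_prediction` and the learning rate are made up for the example.

```python
def learn_reward_prediction(rewards, learning_rate=0.1):
    """Track a reward prediction that is nudged by each prediction error."""
    prediction = 0.0
    history = []
    for reward in rewards:
        error = reward - prediction          # prediction error on this trial
        prediction += learning_rate * error  # move the prediction toward the reward
        history.append(prediction)
    return history

# A stimulus is followed by a reward of 1.0 on 50 consecutive trials.
history = learn_reward_prediction([1.0] * 50)
print(history[0], history[-1])
```

The prediction starts near zero and approaches the delivered reward as the per-trial error shrinks.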

Be able to start Deep reinforcement-learning research

Be able to start a Deep reinforcement-learning engineering role

Understand modern state-of-the-art Deep reinforcement-learning knowledge

Understand Deep reinforcement-learning knowledge

Hello, I am Nitsan Soffair, a Deep RL researcher at BGU.

In my Deep reinforcement-learning course you will learn the newest state-of-the-art Deep reinforcement-learning knowledge.

You will do the following

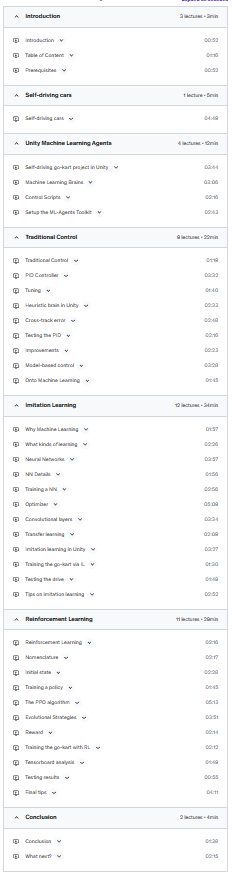

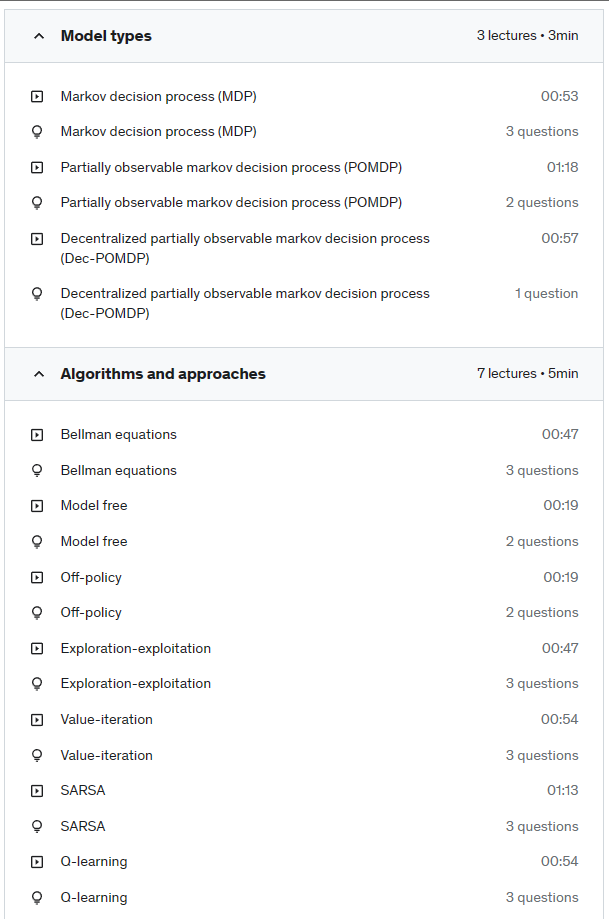

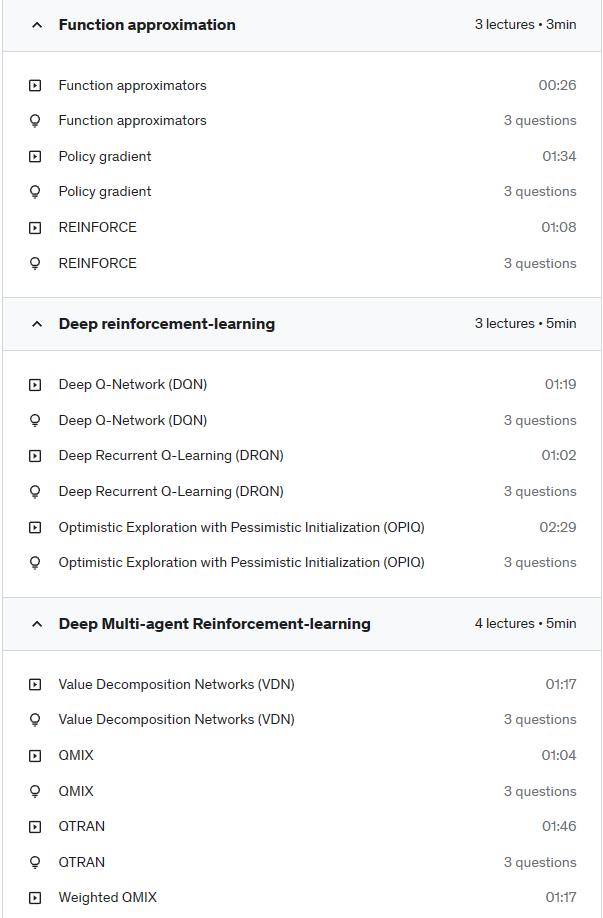

Syllabus

Resources

Configure and use the Unity Machine Learning Agents toolkit to solve physical problems in simulated environments

Understand the concepts of neural networks, supervised and deep reinforcement learning (PPO)

Apply ML control techniques to teach a go-kart to drive around a track in Unity

WARNING: Take this class as a gentle introduction to machine learning, with particular focus on machine vision and reinforcement learning. The Unity project provided in this course is now obsolete because the Unity ML-Agents library is still in beta and its interface keeps changing. Some of the implementation details you will find in this course will look different if you are using the latest release, but the key concepts and the background theory are still valid. Please refer to the official migration documentation on the ml-agents GitHub repository for the latest updates.

Learn how to combine the beauty of Unity with the power of TensorFlow to solve physical problems in a simulated environment with state-of-the-art machine learning techniques.

We study the problem of a go-kart racing around a simple track and try three different approaches to control it: a simple PID controller; a neural network trained via imitation (supervised) learning; and a neural network trained via deep reinforcement learning.
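For readers unfamiliar with the first approach, the sketch below shows what a basic PID controller can look like. It is written in Python rather than as Unity code, and the `PID` class, the gains, and the toy model (steering directly changes the kart's lateral offset from the track centre line) are assumptions for illustration only, not the course's implementation.

```python
class PID:
    """Textbook proportional-integral-derivative controller."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        self.integral += error * dt
        # No derivative term on the first call, when there is no previous error.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(steps=400, dt=0.05):
    """Steer a toy kart back to the centre line; return its final offset."""
    pid = PID(kp=2.0, ki=1.0, kd=0.5)
    offset = 1.0  # kart starts 1 unit away from the centre line
    for _ in range(steps):
        steering = pid.update(0.0 - offset, dt)  # error = target - measured
        offset += steering * dt                  # toy kinematics (assumption)
    return offset

final_offset = simulate()
print(final_offset)
```

In this toy setting the offset decays toward zero; a real kart has richer dynamics, so the gains would need tuning against the actual simulation.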

Each technique has its strengths and weaknesses, which we first show in a theoretical way at a simple conceptual level, and then apply in a practical way. In all three cases the go-kart will be able to complete a lap without crashing.

We provide the Unity template and the files for all three solutions. Then see if you can build on them and improve performance further.

Buckle up and have fun!