Course content

- Getting started in Google Colab

- The ecosystem of Google Colab

- Introduction to PyTorch

- Working with datasets

- Recognizing handwritten digits

- Transfer learning for object recognition

- Recognizing fashion items

- Deep learning best practices

Introduction to Machine Learning and Deep Learning

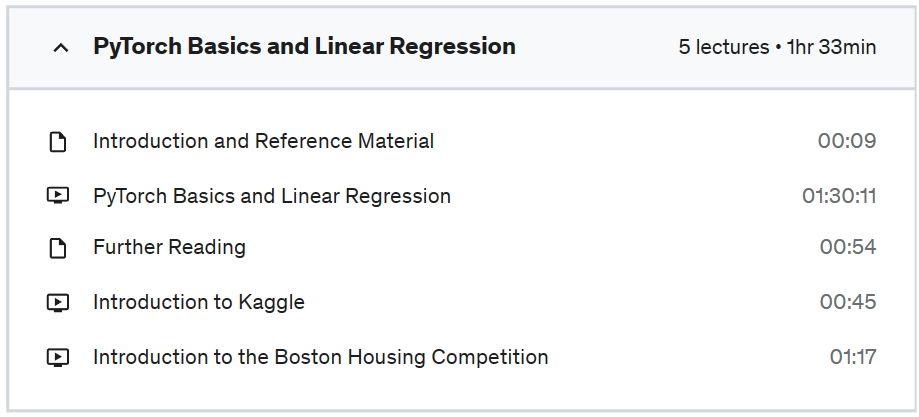

PyTorch Basics: Tensors & Gradients

Linear Regression with PyTorch

Working with Image Data in PyTorch

Image Classification using Convolutional Neural Networks

Residual Networks, Data Augmentation and Regularization Techniques

Generative Adversarial Networks

Deep Learning with PyTorch for Beginners is a series of courses covering various topics like the basics of Deep Learning, building neural networks with PyTorch, CNNs, RNNs, NLP, GANs, etc. This course is Part 1 of 5.

Topics Covered:

1. Introduction to Machine Learning & Deep Learning

2. Introduction on how to use Jovian platform

3. Introduction to PyTorch: Tensors & Gradients

4. Interoperability with Numpy

5. Linear Regression with PyTorch

– System setup

– Training data

– Linear Regression from scratch

– Loss function

– Compute gradients

– Adjust weights and biases using gradient descent

– Train for multiple epochs

– Linear Regression using PyTorch built-ins

– Dataset and DataLoader

– Using nn.Linear

– Loss Function

– Optimizer

– Train the model

– Commit and update the notebook

7. Sharing Jupyter notebooks online with Jovian
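The "Linear Regression from scratch" steps listed above (loss function, gradients, weight updates, multiple epochs) can be sketched as follows. This is a minimal illustration in plain Python rather than the course's own notebook: the toy data, the function names, and the learning rate are all assumptions, and the gradients are written out by hand where PyTorch's autograd would compute them automatically.

```python
# Toy training data following y = 2*x + 1 (hypothetical values for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]


def predict(w, b, x):
    """Linear model: a single weight and a single bias."""
    return w * x + b


def mse(w, b):
    """Loss function: mean squared error over the training data."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradients(w, b):
    """Hand-derived gradients of the MSE with respect to w and b."""
    n = len(xs)
    dw = sum(2 * (predict(w, b, x) - y) * x for x, y in zip(xs, ys)) / n
    db = sum(2 * (predict(w, b, x) - y) for x, y in zip(xs, ys)) / n
    return dw, db


def train(epochs=2000, lr=0.05):
    """Gradient descent: step against the gradient once per epoch."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        dw, db = gradients(w, b)
        w -= lr * dw
        b -= lr * db
    return w, b


w, b = train()
print(f"w={w:.3f}, b={b:.3f}, loss={mse(w, b):.6f}")
```

With PyTorch built-ins, the same loop would typically replace `gradients` with `loss.backward()` and the manual update with an optimizer step.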


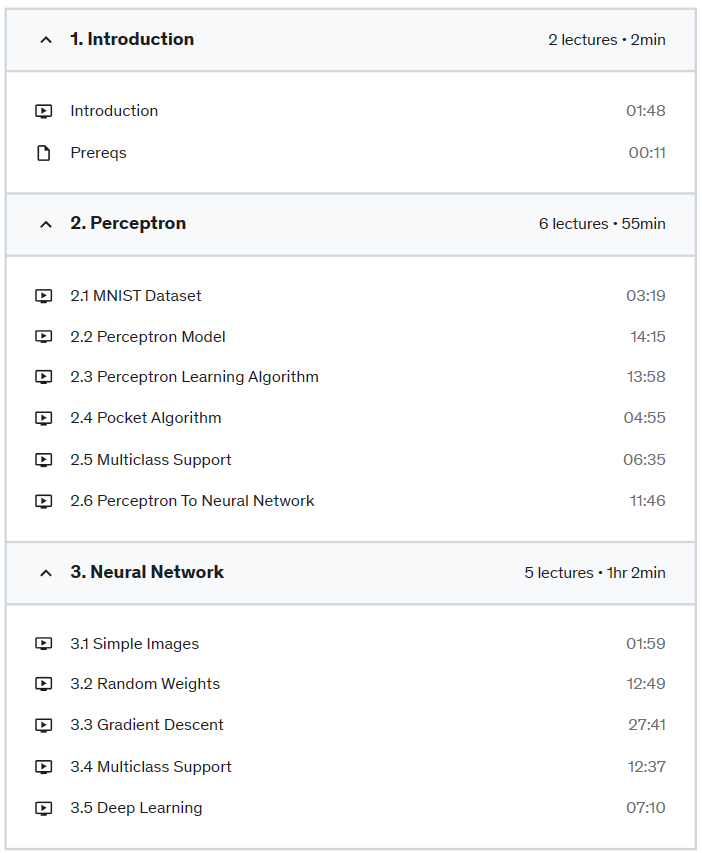

Neural Network Fundamentals

Wanna understand deep learning and neural networks so well, you could code them from scratch? In this course, we’ll do exactly that.

The course starts by motivating and explaining perceptrons, and then gradually works its way toward deriving and coding a multiclass neural network with stochastic gradient descent that can recognize hand-written digits from the famous MNIST dataset.
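As a rough idea of the starting point, a single perceptron trained with the classic perceptron learning rule might look like the sketch below. It is not the course's code: the AND-gate data, the function names, and the hyperparameters are assumptions chosen to keep the example small.

```python
def step(z):
    """Threshold activation: fire (1) when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron learning rule for two inputs: nudge the weights on each mistake."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            pred = step(w[0] * x1 + w[1] * x2 + b)
            err = target - pred  # -1, 0, or +1
            w[0] += lr * err * x1
            w[1] += lr * err * x2
            b += lr * err
    return w, b


# Logical AND is linearly separable, so the rule converges on it.
and_gate = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(and_gate)
predictions = [step(w[0] * x1 + w[1] * x2 + b) for (x1, x2), _ in and_gate]
print(predictions)
```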

Course Goals

This course is all about understanding the fundamentals of neural networks. So, it does not discuss TensorFlow, PyTorch, or any other neural network libraries. However, by the end of this course, you should understand neural networks so well that learning TensorFlow and PyTorch should be a breeze!

Challenges

In this course, I present a number of coding challenges inside the video lectures. The general approach is, we’ll discuss an idea and the theory behind it, and then you’re challenged to implement the idea / algorithm in Python. I’ll discuss my solution to every challenge, and my code is readily available on GitHub.

Prerequisites

In this course, we’ll be using Python, NumPy, Pandas, and a good bit of calculus... but don’t let the math scare you. I explain everything in great detail with examples and visuals.

If you’re rusty on your NumPy or Pandas, check out my free courses Python NumPy For Your Grandma and Python Pandas For Your Grandpa.

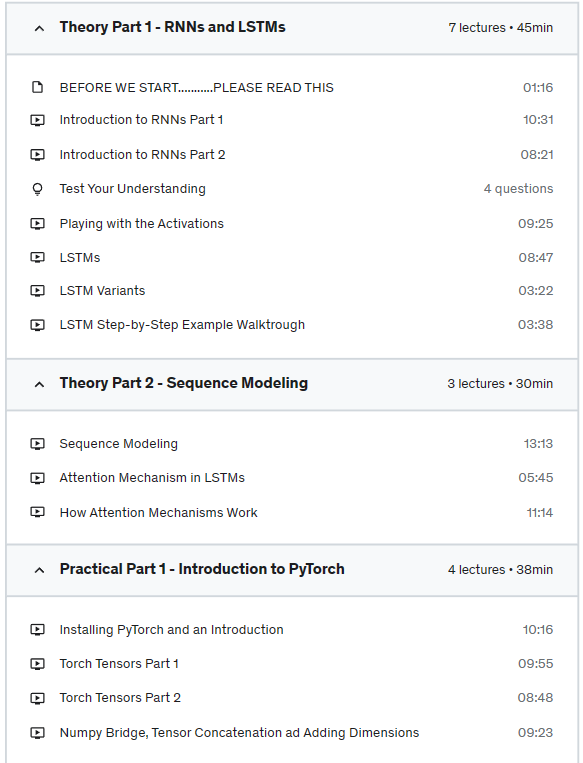

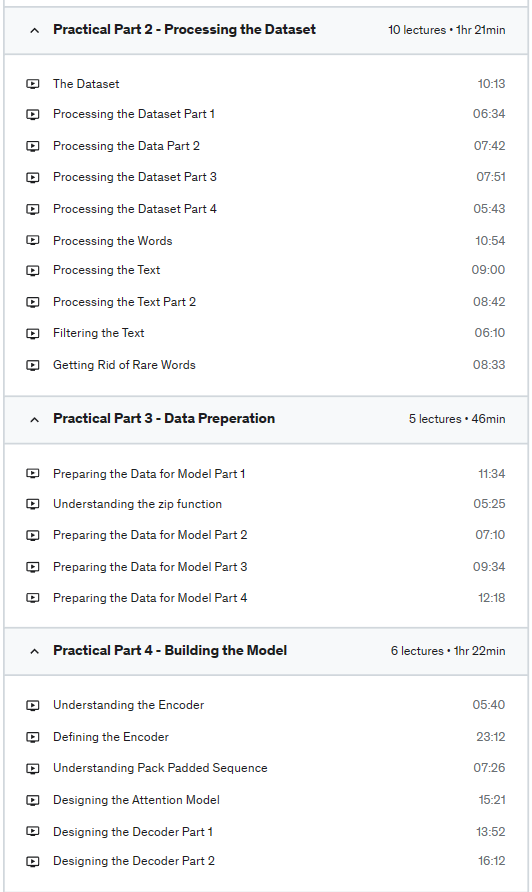

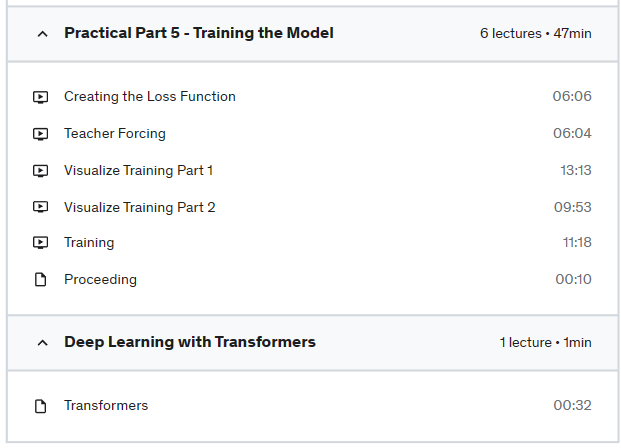

Understand the theory behind Sequence Modeling

Understand the theory of how Chatbots work

Understand the theory of how RNNs and LSTMs work

Get Introduced to PyTorch

Implement a Chatbot in PyTorch

Understand the theory of different Sequence Modeling Applications

In this course, you’ll learn the following:

We will first cover the theoretical concepts you need to know for building a Chatbot, which include RNNs, LSTMs and Sequence Models with Attention.

Then we will introduce you to PyTorch, a very powerful and advanced deep learning library. We will show you how to install it and how to work with it and with PyTorch Tensors.

Then we will build our Chatbot in PyTorch!

Please note: if you don’t have prior knowledge of neural networks and how they work, you will struggle with this course. This is not a Deep Learning course but an application of Deep Learning, as the course name implies (Applied Deep Learning: Build a Chatbot). The course level is Intermediate, not Beginner, so please familiarize yourself with neural networks and their concepts before taking this course. If you are already familiar with them, then you’re ready to start this journey!

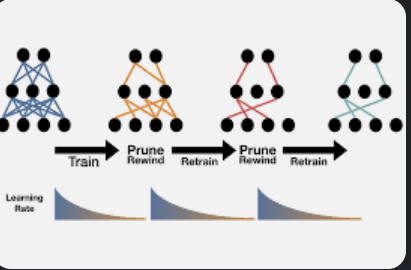

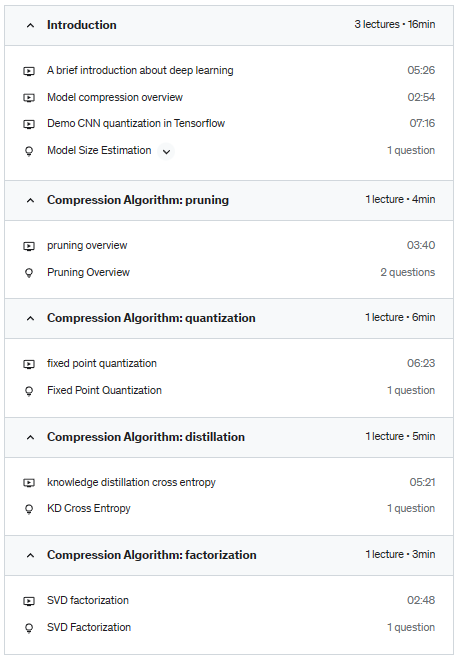

Practice model compression using TensorFlow, PyTorch, ONNX, and TensorRT

Serve a compressed model in AWS SageMaker

Understand model compression algorithms: pruning, quantization, distillation and factorization

Conduct a literature survey of the most recent compression techniques

This course is intended to provide learners with an in-depth understanding of techniques used in compressing deep learning models. The techniques covered in the course include pruning, quantization, knowledge distillation, and factorization, all of which are essential for anyone working in the field of deep learning, particularly those focused on computer vision and natural language processing. These techniques should be generally applicable to all deep learning models.
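To make two of these techniques concrete, here is a small sketch of magnitude pruning and symmetric 8-bit quantization applied to a flat list of weights. It uses plain Python only; the function names and the example weights are hypothetical, and real frameworks apply the same ideas per tensor or per channel with considerably more machinery.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_magnitude[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric uniform quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q, scale):
    """Map the integers back to approximate floating-point weights."""
    return [v * scale for v in q]


weights = [0.5, -0.02, 0.9, 0.1, -0.7, 0.03]  # hypothetical layer weights
pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(pruned)
print(q)
```

Pruning trades accuracy for sparsity, while quantization trades it for a smaller number format; the rounding error of each restored weight is bounded by half the scale.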

One of the primary objectives of this course is to provide advanced content that is updated with the latest algorithms. This includes product quantization and its variants, tensor factorization, and other cutting-edge techniques that are rapidly evolving in the field of deep learning. To ensure learners are equipped with the knowledge they need to succeed in this field, the course will summarize these techniques based on academic papers, while avoiding an emphasis on experiment result details. It’s worth noting that leaderboard results are updated frequently, and new models may require compression. As a result, the course will focus on the technical aspects of these techniques, helping learners understand what happens behind the scenes.

Upon completion of the course, learners will feel confident in their ability to read news, blogs, and academic papers related to model compression. You will be encouraged to apply these techniques to your own work and share the knowledge with others.

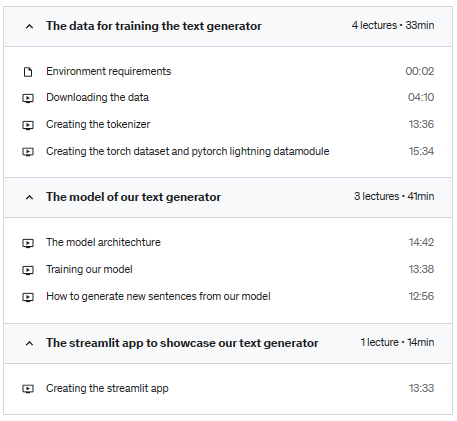

Learn how to create PyTorch datasets and PyTorch Lightning data modules

Learn how the simplest version of a text generator is put together, and what the training objective is

Learn how to load pretrained models and sample new text from them

Learn how to create an app with Streamlit to showcase your text generator

In this course, the primary objective is to develop a text generator from scratch using next-token prediction. To accomplish this, we will utilize an open-source dataset called BookCorpus. By the end of this course, we will have a better understanding of how to build a text generator and implement the necessary components for training a model and generating text.
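Next-token prediction means every training example pairs a window of tokens with the same window shifted one position to the right. The sketch below illustrates that pairing in plain Python; the whitespace tokenizer, the sample sentence, and the `next_token_pairs` helper are assumptions for illustration, not the course's actual data pipeline.

```python
def next_token_pairs(token_ids, context_len):
    """Build (inputs, targets) windows where targets are inputs shifted by one."""
    pairs = []
    for start in range(len(token_ids) - context_len):
        inputs = token_ids[start:start + context_len]
        targets = token_ids[start + 1:start + context_len + 1]
        pairs.append((inputs, targets))
    return pairs


# Hypothetical whitespace tokenizer over a tiny sample text.
text = "the cat sat on the mat"
vocab = {word: idx for idx, word in enumerate(sorted(set(text.split())))}
token_ids = [vocab[word] for word in text.split()]

pairs = next_token_pairs(token_ids, context_len=3)
for inputs, targets in pairs:
    print(inputs, "->", targets)
```

In a PyTorch setting, a helper like this would usually live inside a dataset's item lookup so that a data loader can batch the windows.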

One of the first things we will learn is how to load data into our model. We will explore various techniques for batching data and discuss why certain batching methods are better than others. We will also cover how to preprocess and clean the data to ensure that it is suitable for training our model.

After loading and preprocessing the data, we will delve into the process of training a model. We will learn about the architecture of a typical text generation model and the different types of layers that can be used. We will also cover topics such as loss functions and optimization algorithms and explore the impact that these have on our model’s performance.

Once we have trained our model, we will move on to generating text using our newly trained text generator. We will explore various approaches for generating text, such as random sampling, greedy decoding, and beam search. We will also discuss how to tune the hyperparameters of our model to achieve better results.
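The difference between greedy decoding and random sampling can be seen on a single step of generation. The sketch below assumes a model has already produced one logit per vocabulary entry; the logits, the temperature parameter, and the function names are hypothetical. Beam search is left out to keep the example short.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; lower temperature sharpens the distribution."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """Greedy decoding: always take the most likely token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_pick(logits, temperature=1.0, rng=random):
    """Random sampling: draw a token in proportion to its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [1.0, 3.0, 2.0]  # hypothetical scores for a three-token vocabulary
rng = random.Random(0)    # seeded so repeated runs draw the same tokens
print("greedy:", greedy_pick(logits))
print("sampled:", [sample_pick(logits, temperature=0.8, rng=rng) for _ in range(5)])
```

Greedy decoding is deterministic and tends to repeat itself, whereas sampling introduces variety at the cost of occasionally picking unlikely tokens.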

Finally, we will create a small app that can run in the browser to showcase our text generator. We will discuss various front-end frameworks such as React and Vue.js and explore how to integrate our model into a web application.

Overall, this course will provide us with a comprehensive understanding of how to build a text generator from scratch and the tools and techniques required to accomplish this task.

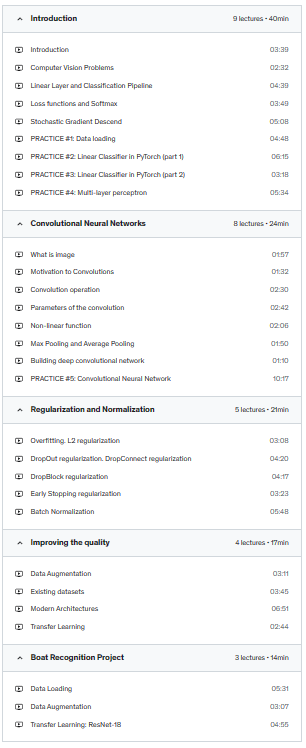

Convolutional Neural Networks

Image Processing

Advanced Deep Learning Techniques

Regularization, Normalization

Transfer Learning

Dear friend, welcome to the course “Modern Deep Convolutional Neural Networks”! I have done my best to share my practical experience in Deep Learning and Computer Vision with you.

The course consists of 4 blocks:

If you don’t understand something, feel free to ask questions. I will answer you directly or make a video explanation.

Prerequisites: