Advanced knowledge of NLP

Understand NLP

Advanced knowledge of DL

Understand DL

Requirements

- Motivation

- Interest

- Mathematical approach

Description

I am Nitsan Soffair, a deep RL researcher at BGU.

In this course you will learn NLP with vector spaces.

You will

- Get knowledge of

  - Sentiment analysis with logistic regression

  - Sentiment analysis with Naive Bayes

  - Vector space models

  - Machine translation and document search

- Validate your knowledge by answering a quiz at the end of each lecture

- Be able to complete the course in about 2 hours

Syllabus

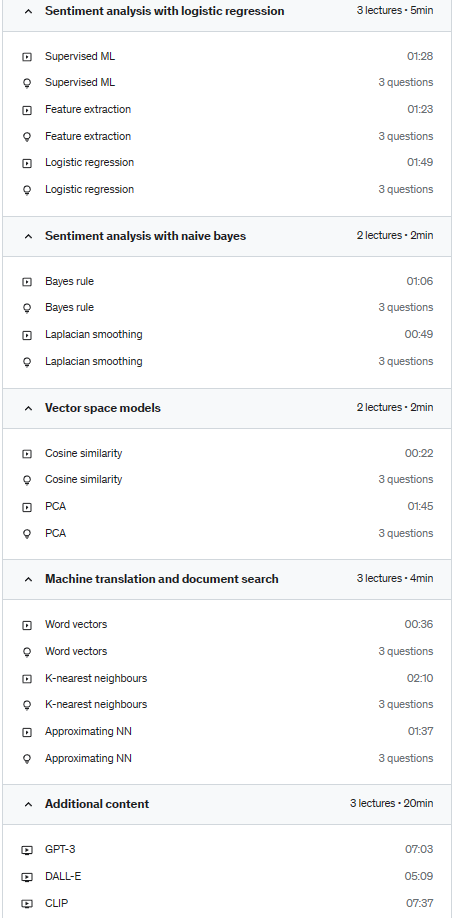

- Sentiment analysis with logistic regression

  - Supervised ML

  - Feature extraction

  - Logistic regression

- Sentiment analysis with Naive Bayes

  - Bayes' rule

  - Laplacian smoothing

- Vector space models

  - Euclidean distance

  - Cosine similarity

  - PCA

- Machine translation and document search

  - Word vectors

  - K-nearest neighbours

  - Approximate nearest neighbours

- Additional content

  - GPT-3

  - DALL-E

  - CLIP

The vector space model, or term vector model, is an algebraic model for representing text documents (and objects in general) as vectors of identifiers, such as index terms. It is used in information filtering, information retrieval, indexing and relevancy ranking. Its first use was in the SMART Information Retrieval System.
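To make the idea of documents as term vectors concrete, here is a minimal sketch in plain Python. The three example documents and the helper names `term_vector` and `cosine_similarity` are illustrative assumptions, not course material; it uses raw term counts and whitespace tokenization, which is only one of several possible weighting and tokenization schemes.

```python
import math
from collections import Counter


def term_vector(text, vocabulary):
    """Represent a text as a vector of term counts over a fixed vocabulary."""
    counts = Counter(text.lower().split())
    return [counts[term] for term in vocabulary]


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


# Hypothetical toy corpus, chosen only for illustration.
documents = [
    "the cat sat on the mat",
    "the cat lay on the rug",
    "stock prices fell sharply today",
]

# The vocabulary is every distinct word in the corpus, in a fixed order.
vocabulary = sorted({word for doc in documents for word in doc.lower().split()})
vectors = [term_vector(doc, vocabulary) for doc in documents]

# Documents 0 and 1 share several terms; documents 0 and 2 share none.
sim_01 = cosine_similarity(vectors[0], vectors[1])
sim_02 = cosine_similarity(vectors[0], vectors[2])
print(sim_01, sim_02)
```

In this toy corpus the two cat sentences score about 0.75, while the unrelated finance sentence scores 0, which is the kind of ranking signal a document search would use.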

Supervised learning (SL) is the machine learning task of learning a function that maps an input to an output based on example input-output pairs. It infers a function from labeled training data consisting of a set of training examples. In supervised learning, each example is a pair consisting of an input object (typically a vector) and a desired output value (also called the supervisory signal). A supervised learning algorithm analyzes the training data and produces an inferred function, which can be used for mapping new examples. An optimal scenario will allow for the algorithm to correctly determine the class labels for unseen instances. This requires the learning algorithm to generalize from the training data to unseen situations in a “reasonable” way (see inductive bias). This statistical quality of an algorithm is measured through the so-called generalization error.

The parallel task in human and animal psychology is often referred to as concept learning.
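The supervised learning setup described above (labeled input-output pairs in, an inferred function out) can be sketched with a tiny logistic regression trained by stochastic gradient descent. This is a hedged illustration rather than the course's implementation: the features (counts of positive and negative words per text), the training pairs, the learning rate and the epoch count are all assumptions made up for the example.

```python
import math


def sigmoid(z):
    """Squash a real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def score(weights, bias, features):
    """Predicted probability that the label is 1."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(examples, learning_rate=0.5, epochs=500):
    """Fit weights and bias on (features, label) pairs with per-example gradient steps."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = score(weights, bias, features) - label
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def predict(weights, bias, features):
    """Map the probability to a class label using a 0.5 threshold."""
    return 1 if score(weights, bias, features) >= 0.5 else 0


# Hypothetical labeled data: ([positive word count, negative word count], label),
# where label 1 means positive sentiment and 0 means negative sentiment.
training_data = [
    ([3, 0], 1),
    ([2, 1], 1),
    ([0, 3], 0),
    ([1, 2], 0),
]

weights, bias = train(training_data)

# Inputs the model has not seen during training.
unseen = [[4, 1], [0, 2]]
predictions = [predict(weights, bias, x) for x in unseen]
print(predictions)
```

The two unseen inputs are not in the training set, so classifying them correctly is a small instance of generalizing from the training data to new examples.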

Resources

- Wikipedia

- Coursera

Who this course is for:

- Anyone interested in NLP

- Anyone interested in AI

Course content